Published: 2018-02-08 02:42:18

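1.1 Introduction to Flume

1.1.1 Overview

Flume is a distributed, reliable, and highly available system for collecting, aggregating, and transporting large volumes of log data.

Flume can collect source data in many forms, such as files and socket packets, and can deliver the collected data to many external storage systems, including HDFS, HBase, Hive, and Kafka.

Common collection requirements can be met with simple Flume configuration alone.

Flume can also be extended with custom components for special scenarios, so it fits most day-to-day data collection needs.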

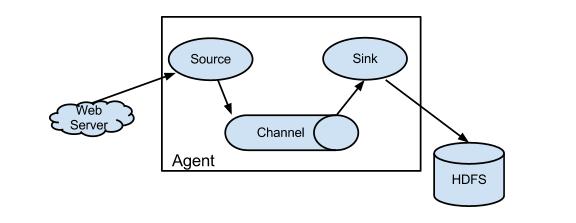

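1.1.2 How it works

1. The core role in a Flume deployment is the agent. A Flume collection system is formed by connecting agents to one another.

2. Each agent acts as a data courier and contains three components:

a) Source: the collection endpoint. It connects to the data source and obtains data.

b) Sink: the delivery endpoint. It passes data on to the next-tier agent or to the final storage system.

c) Channel: the data pipeline inside the agent. It carries data from the source to the sink.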

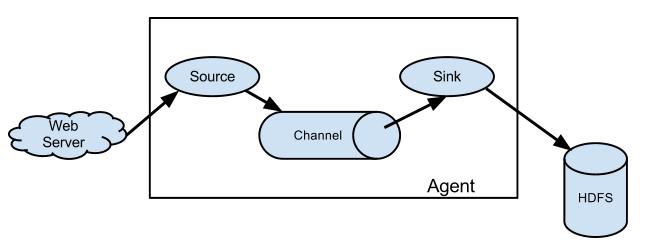
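The source, channel, and sink roles described above map directly onto the naming scheme of an agent's configuration file. The following is a minimal sketch of that wiring, assuming the illustrative names `a1`, `r1`, `c1`, and `k1`; the helper `agent_skeleton` is hypothetical and only prints text. Each component still needs its own `type` and settings before an agent can start.

```shell
# Print the minimal wiring for one agent (hypothetical helper, prints text only).
# $1 = agent name, $2 = source name, $3 = channel name, $4 = sink name
agent_skeleton() {
  cat <<EOF
$1.sources = $2
$1.channels = $3
$1.sinks = $4
$1.sources.$2.channels = $3
$1.sinks.$4.channel = $3
EOF
}

agent_skeleton a1 r1 c1 k1
```

Note the asymmetry in the last two lines: a source is bound with `channels` (plural) because it can feed several channels, while a sink is bound with `channel` (singular) because it drains exactly one.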

1.1.4 Flume collection system topologies

1. Simple topology: a single agent collects the data

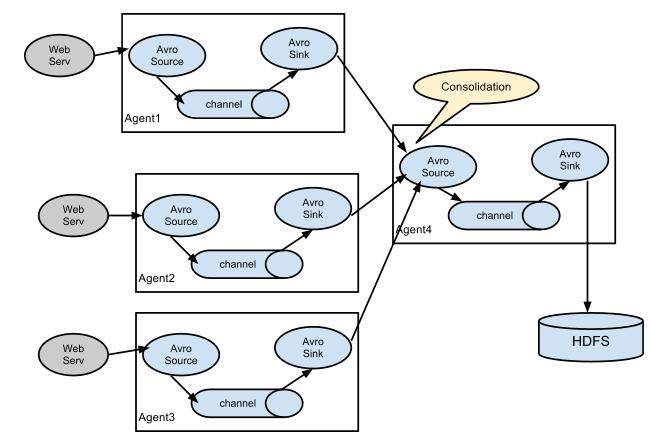

2. Complex topology: multiple tiers of agents chained together

1.2 Flume in practice

1.2.1 Installing and deploying Flume

1. Installing Flume is simple: just unpack the archive. This assumes a Hadoop environment is already in place.

Upload the installation package to the node where the data source lives, then unpack it:

    tar -zxvf apache-flume-1.8.0-bin.tar.gz

Configure the environment variables:

    vi /etc/profile

    HBASE_HOME=/home/hadoop/apps/hbase
    ZOOKEEPER_HOME=/home/hadoop/apps/zookeeper
    HADOOP_HOME=/home/hadoop/apps/hadoop-2.8.1
    JAVA_HOME=/opt/jdk1.8.0_121
    FLUME_HOME=/home/hadoop/apps/flume
    # HIVE_HOME is referenced below but is not set in this excerpt
    PATH=$FLUME_HOME/bin:$HIVE_HOME/bin:$HBASE_HOME/bin:$ZOOKEEPER_HOME/bin:$JAVA_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH
    export FLUME_HOME HIVE_HOME HBASE_HOME ZOOKEEPER_HOME HADOOP_HOME JAVA_HOME PATH USER LOGNAME MAIL HOSTNAME HISTSIZE HISTCONTROL

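Then go to the Flume directory and edit flume-env.sh under conf to set JAVA_HOME:

    export JAVA_HOME=/opt/jdk1.8.0_121

2. Describe the collection plan in a configuration file according to your collection requirements (the file name is arbitrary).

3. Start the Flume agent on the relevant node, pointing it at that configuration file.

Start with the simplest possible example to check that the environment works.

1. Create a new file in Flume's conf directory:

    vi netcat-logger.conf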

    # Name the components of this agent
    a1.sources = r1
    a1.sinks = k1
    a1.channels = c1

    # Describe and configure the source component: r1
    a1.sources.r1.type = netcat
    a1.sources.r1.bind = localhost
    a1.sources.r1.port = 44444

    # Describe and configure the sink component: k1
    a1.sinks.k1.type = logger

    # Describe and configure the channel component; this one buffers events in memory
    a1.channels.c1.type = memory
    a1.channels.c1.capacity = 1000
    a1.channels.c1.transactionCapacity = 100

    # Describe how the source, channel, and sink are connected
    a1.sources.r1.channels = c1
    a1.sinks.k1.channel = c1

bin/flume-ng agent -c conf -f conf/netcat-logger.conf -n a1 -Dflume.root.logger=INFO,console

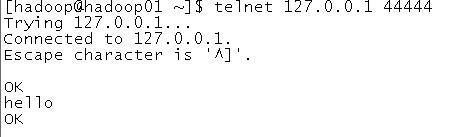

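-c conf: the directory that holds Flume's own configuration files
-f conf/netcat-logger.conf: the collection plan we wrote
-n a1: the name of this agent

2. Test

Send data to the port the agent is listening on so that it has something to collect. With bind = localhost as configured above, the agent only accepts connections from its own node; to accept connections from other machines, bind to 0.0.0.0 or to the node's address instead.

    telnet agent-hostname port

For example:

    telnet localhost 44444

If the telnet command is not found, install it:

    [root@hdp08 ~]# yum install telnet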
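When telnet is not available and cannot be installed, the same test can be scripted. The sketch below is one possible approach, assuming the script runs under bash (it relies on bash's `/dev/tcp` pseudo-device, which plain `sh` lacks); the helper name `send_line` is hypothetical. It sends one line, which the netcat source turns into one event, and prints the first line the agent sends back.

```shell
# Send one line to a TCP port and print the first line of the reply.
# Requires bash: /dev/tcp and `read -t` are bash features.
# $1 = host, $2 = port, $3 = text to send
send_line() {
  if [ "$#" -ne 3 ]; then
    echo "usage: send_line HOST PORT TEXT" >&2
    return 2
  fi
  {
    printf '%s\n' "$3" >&3                 # one line becomes one Flume event
    IFS= read -r -t 5 reply <&3 &&         # wait up to 5 s for the acknowledgement
      printf '%s\n' "$reply"
  } 3<>"/dev/tcp/$1/$2"                    # fails with non-zero status if nothing is listening
}

# Example call against the agent configured above (not run here):
#   send_line localhost 44444 "hello flume"
```

With the netcat source's default settings the agent should acknowledge each line, and the event body should appear in the agent's console output from the logger sink.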

1.2.2 Collection examples

1. Collecting log files from a directory

    # Name the components on this agent
    a1.sources = r1
    a1.sinks = k1
    a1.channels = c1

    # Describe/configure the source
    # Watch a directory: spoolDir is the directory to watch; fileHeader controls
    # whether each event gets a header holding the source file's absolute path
    a1.sources.r1.type = spooldir
    a1.sources.r1.spoolDir = /home/hadoop/flumespool
    a1.sources.r1.fileHeader = true

    # Describe the sink
    a1.sinks.k1.type = logger

    # Use a channel which buffers events in memory
    a1.channels.c1.type = memory
    a1.channels.c1.capacity = 1000
    a1.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a1.sources.r1.channels = c1
    a1.sinks.k1.channel = c1

Start command:

    bin/flume-ng agent -c ./conf -f ./conf/spool-logger.conf -n a1 -Dflume.root.logger=INFO,console

2. Collecting a directory into HDFS

Requirement: new files keep appearing in a particular directory on a server, and every new file must be collected into HDFS.

Based on this requirement, first settle the three key elements:

- Source: monitor a file directory, so use spooldir
- Sink: the HDFS file system, so use the hdfs sink
- Channel between source and sink: either a file channel or a memory channel

Configuration file:

    # Name the components on this agent
    a1.sources = r1
    a1.sinks = k1
    a1.channels = c1

    # Describe/configure the source
    # Watch a directory: spoolDir is the directory to watch; fileHeader controls
    # whether each event gets a header holding the source file's absolute path
    a1.sources.r1.type = spooldir
    a1.sources.r1.spoolDir = /home/hadoop/flumespool
    a1.sources.r1.fileHeader = true

    # Describe the sink
    a1.sinks.k1.type = hdfs
    a1.sinks.k1.hdfs.path = /flume/events/%y-%m-%d/%H%M/
    a1.sinks.k1.hdfs.filePrefix = events-
    a1.sinks.k1.hdfs.round = true
    a1.sinks.k1.hdfs.roundValue = 10
    a1.sinks.k1.hdfs.roundUnit = minute
    a1.sinks.k1.hdfs.rollInterval = 3
    a1.sinks.k1.hdfs.rollSize = 20
    a1.sinks.k1.hdfs.rollCount = 5
    a1.sinks.k1.hdfs.batchSize = 1
    a1.sinks.k1.hdfs.useLocalTimeStamp = true
    # File type to write: the default is SequenceFile; DataStream writes plain text
    a1.sinks.k1.hdfs.fileType = DataStream

    # Use a channel which buffers events in memory
    a1.channels.c1.type = memory
    a1.channels.c1.capacity = 1000
    a1.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a1.sources.r1.channels = c1
    a1.sinks.k1.channel = c1

Channel parameters:

capacity: the maximum number of events the channel can hold
transactionCapacity: the maximum number of events taken from the source or delivered to the sink in a single transaction
keep-alive: the time allowed, in seconds, for adding an event to the channel or removing one from it
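The `round`, `roundValue`, and `roundUnit` settings in the HDFS sink above decide which `%H%M` directory an event lands in: the timestamp is rounded down to the nearest multiple of `roundValue` before the path is expanded. The arithmetic can be sketched as follows; `hdfs_time_bucket` is a hypothetical helper that only reproduces the rounding for minutes and is not part of Flume.

```shell
# Round a wall-clock time down to a multiple of STEP minutes and
# print it in %H%M form, mirroring round=true / roundUnit=minute.
# $1 = hour (00-23), $2 = minute (00-59), $3 = step in minutes (e.g. 10)
hdfs_time_bucket() {
  hh=${1#0}; mm=${2#0}          # drop one leading zero so 08 is not parsed as octal
  hh=${hh:-0}; mm=${mm:-0}      # "0" becomes empty after stripping, so restore it
  step=$3
  rounded=$(( mm / step * step ))
  printf '%02d%02d\n' "$hh" "$rounded"
}

hdfs_time_bucket 17 38 10   # prints 1730
hdfs_time_bucket 09 08 10   # prints 0900
```

So with `roundValue = 10`, every event stamped between 17:30 and 17:39 is written under the same `1730` directory. Because `useLocalTimeStamp = true`, the time used is the agent's local clock rather than a timestamp header on the event.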
3. Collecting a file into HDFS

Requirement: a business system writes logs with log4j, for example, and the log keeps growing. The data appended to the log file must be collected into HDFS in real time.

Based on this requirement, first settle the three key elements:

- Source: monitor updates to a file's content, so use exec with 'tail -F file'
- Sink: the HDFS file system, so use the hdfs sink
- Channel between source and sink: either a file channel or a memory channel

Configuration file:

    # Name the components on this agent
    a1.sources = r1
    a1.sinks = k1
    a1.channels = c1

    # Describe/configure the source
    a1.sources.r1.type = exec
    a1.sources.r1.command = tail -F /home/hadoop/log/test.log

    # Describe the sink
    a1.sinks.k1.type = hdfs
    a1.sinks.k1.hdfs.path = /flume/events/%y-%m-%d/%H%M/
    a1.sinks.k1.hdfs.filePrefix = events-
    a1.sinks.k1.hdfs.round = true
    a1.sinks.k1.hdfs.roundValue = 10
    a1.sinks.k1.hdfs.roundUnit = minute
    a1.sinks.k1.hdfs.rollInterval = 3
    a1.sinks.k1.hdfs.rollSize = 20
    a1.sinks.k1.hdfs.rollCount = 5
    a1.sinks.k1.hdfs.batchSize = 1
    a1.sinks.k1.hdfs.useLocalTimeStamp = true
    # File type to write: the default is SequenceFile; DataStream writes plain text
    a1.sinks.k1.hdfs.fileType = DataStream

    # Use a channel which buffers events in memory
    a1.channels.c1.type = memory
    a1.channels.c1.capacity = 1000
    a1.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a1.sources.r1.channels = c1
    a1.sinks.k1.channel = c1

Start command:

    bin/flume-ng agent -c conf -f conf/tail-hdfs.conf -n a1 -Dflume.root.logger=INFO,console
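To see the exec source pick up appended data, something has to keep writing to the log file. The following is a small sketch of a test writer; `append_test_lines` is a hypothetical helper, and the line format is made up for illustration rather than taken from a real log4j layout.

```shell
# Append numbered, timestamped lines to a file, one at a time.
# $1 = log file, $2 = number of lines, $3 = optional pause in seconds (default 0)
append_test_lines() {
  i=1
  while [ "$i" -le "$2" ]; do
    printf '%s test line %d\n' "$(date '+%Y-%m-%d %H:%M:%S')" "$i" >> "$1"
    sleep "${3:-0}"
    i=$((i + 1))
  done
}

# Example call against the file tailed above (not run here):
#   append_test_lines /home/hadoop/log/test.log 100 1
```

With the sink settings shown above (`rollInterval = 3`, `rollSize = 20`, `rollCount = 5`), a file is rolled as soon as any one of those limits is reached, so a run like this produces many very small HDFS files. That is convenient for watching a demo but the values would normally be raised for real workloads.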

4. Forwarding collected data to another agent

The first agent collects data and sends it to an Avro port (the configuration below reads from a spooldir source; an exec source running tail -F would be wired the same way). A second node can then run an Avro source to relay the data on to external storage.

    # Name the components on this agent
    a1.sources = r1
    a1.sinks = k1
    a1.channels = c1

    # Describe/configure the source
    a1.sources.r1.type = spooldir
    a1.sources.r1.spoolDir = /home/hadoop/flumespool
    a1.sources.r1.fileHeader = true

    # Describe the sink
    a1.sinks.k1.type = avro
    a1.sinks.k1.hostname = hdp09
    a1.sinks.k1.port = 4141
    a1.sinks.k1.batch-size = 2

    # Use a channel which buffers events in memory
    a1.channels.c1.type = memory
    a1.channels.c1.capacity = 1000
    a1.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a1.sources.r1.channels = c1
    a1.sinks.k1.channel = c1

The second agent receives data on the Avro port and sinks it to the logger. Its configuration file, avro-hdfs.conf:

    # Name the components on this agent
    a1.sources = r1
    a1.sinks = k1
    a1.channels = c1

    # Describe/configure the source
    a1.sources.r1.type = avro
    a1.sources.r1.bind = 0.0.0.0
    a1.sources.r1.port = 4141

    # Describe the sink
    a1.sinks.k1.type = logger

    # Use a channel which buffers events in memory
    a1.channels.c1.type = memory
    a1.channels.c1.capacity = 1000
    a1.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a1.sources.r1.channels = c1
    a1.sinks.k1.channel = c1

To test the receiving agent on its own, send a file to it with the Avro client:

    $ bin/flume-ng avro-client -H localhost -p 4141 -F /usr/logs/log.10
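The first-tier agent above, like the earlier spooldir examples, expects files in /home/hadoop/flumespool to be complete and unchanging: the spooling directory source stops with an error if a file is modified after it has been placed there or if a file name is reused. One way to feed it safely is to copy into a staging directory first and then rename into the spool directory. This is a sketch under assumptions: `spool_drop` is a hypothetical helper, and the staging directory is an invented location that must sit on the same filesystem as the spool directory but outside it.

```shell
# Copy a file into the spooling directory so it only appears there once complete.
# $1 = source file, $2 = spooling directory,
# $3 = staging directory (assumed: same filesystem as $2, and not inside $2)
spool_drop() {
  name="$(basename "$1").$(date +%s)"   # timestamp suffix makes name reuse unlikely
  cp "$1" "$3/$name" &&                 # slow copy happens out of Flume's sight
    mv "$3/$name" "$2/$name" &&         # rename within one filesystem is atomic
    printf '%s\n' "$2/$name"            # report where the file ended up
}

# Example call (paths other than the spool directory are illustrative, not run here):
#   spool_drop /var/log/app/access.log /home/hadoop/flumespool /home/hadoop/flumestage
```

Once Flume has finished reading a file it renames it with a `.COMPLETED` suffix by default, which is a quick way to confirm that a dropped file was picked up.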

1.3 More source and sink components

Flume supports many source and sink types. For details, see the official documentation: http://flume.apache.org/FlumeUserGuide.html
